AI, Science, and the Humanities

I thank “Hagibunmi” from the Physics Research open chat for his valuable feedback. This essay was originally written in Korean and machine translated by Claude 4.5 Sonnet. I then fully reviewed and revised the translation myself.

Introduction

Finding and valuing what makes humans unique is almost instinctive. Anyone experiencing the dramatic transformation of the AI era has likely felt this urge at least once. I am human before I am anything else, and this fact forms the foundation of my identity. We must therefore think deeply about what characteristics are uniquely human.

In this rapidly changing “flood of technology,” I argue that AI researchers must actively pursue an understanding of humans and society beyond mere technical knowledge if they are to navigate with steady hands on the helm. However, I found it difficult to paint a three-dimensional picture using the traditional approach of simply listing cases where the humanities matter and offering two or three supporting reasons. Instead, I aim to lead the discussion naturally from questions about the essence of humanity to conversations about society and legal frameworks. Ultimately, I hope to sketch a vision of a future where humans and AI exist in harmony by exploring how legal and ethical systems might evolve.

What Makes Us Human?

What is the essence of humanity—the unique characteristic that distinguishes humans from everything else? Though its manifestations vary by era, humans have always sought to be unique. French sociologist Pierre Bourdieu argued through his concept of “distinction” that people pursue social hierarchies and differentiation to set themselves apart from others. The ancient Greek philosopher Aristotle claimed that humans are the only beings possessing reason (logos). Christianity regards humans as noble beings created in God’s image. Throughout history and across cultures, humans have constructed frameworks of thought to elevate themselves.

Of course, scientific theories challenged this view. Copernicus’s heliocentric theory in the 1500s and Darwin’s theory of evolution in the 1800s shook traditional religious worldviews. The revelation that Earth is not the center of the universe, and that humanity is merely one species among countless others, shocked people deeply. Yet humans remained distinctive: we use tools freely, and we create and use writing systems. The importance of reason, as Aristotle claimed, remained valid. Even as science and technology refuted parts of anthropocentric thinking, they became tools that accelerated civilization and elevated humanity’s status. New interpretations of anthropocentrism prevented science and technology from diminishing human worth.

The Rise of Computing and AI

“I believe that in about fifty years’ time it will be possible to programme computers, with a storage capacity of about $10^9$, to make them play the imitation game so well that an average interrogator will not have more than 70 percent chance of making the right identification after five minutes of questioning. … I believe that at the end of the century the use of words and general educated opinion will have altered so much that one will be able to speak of machines thinking without expecting to be contradicted.”

— A. M. TURING, I.—COMPUTING MACHINERY AND INTELLIGENCE, Mind, Volume LIX, Issue 236, October 1950, Pages 433–460

Today’s computers are physical implementations of the logical structures underlying rational thought, abstracted into computable forms. However, computational ability alone does not imply intelligence, and it took a long time before computers could meaningfully simulate human intelligence. In his 1950 paper “Computing Machinery and Intelligence,” Turing predicted that by around 2000, fifty years later, machines would play the imitation game so well that an average interrogator would have no more than a 70 percent chance of correctly telling machine from human after five minutes of questioning. It is remarkable that a founder of computer science predicted the development of AI so early and devised a test (the Turing Test) to evaluate it. Even so, “Moravec’s paradox,” in which tasks easy for one system are difficult for another operating on entirely different physical foundations, persisted for a long time.

Because of Moravec’s paradox, “learning” long seemed to remain a uniquely human domain among the various domains of intelligence. However, rapid advances in machine learning have made AI capable of replacing much of human intellectual labor. Across all fields, we are now witnessing cases where AI produces intellectual output superior to that of experts in the field. OpenAI’s recently released GPT-5 Pro model, which can use a variety of tools, shows 89.4% accuracy on GPQA Diamond. This benchmark is designed to evaluate graduate-level knowledge and reasoning, and yet most frontier language models surpass the 81.3% accuracy of PhD-level expert groups in each field. GPT-5 Pro also shows 42.0% accuracy on the “Humanity’s Last Exam” benchmark, whose questions are drawn from expert-level knowledge across numerous disciplines, including advanced mathematics, physics, biology, and specialized fields like ancient Roman inscriptions or avian anatomy. Setting aside the changes in perception needed for society to accept AI and judging solely from these indicators, many white-collar jobs, including those of civil servants, developers, and consultants, appear likely to become automatable fairly soon. Like machines during the Luddite movement, AI strikes many people in modern society as an existential threat.

The Irony of Automation

Now it seems that all that remains for us is to correct these machines when they make errors. In her paper “Ironies of Automation,” Lisanne Bainbridge argued that in an automated society, humans are left only to monitor and to intervene when automation fails, but because such interventions are rare, humans fail to acquire the skills and experience they need when they must actually step in. Kant’s ought-implies-can principle holds that to be bound by an obligation, one must be able to perform it. Accordingly, for an actor to bear moral responsibility, free will must come first. Although the ways automation fails vary, it seems clear that under current legal frameworks humans must ultimately bear responsibility in all these situations. Can humans bear full responsibility for the actions of current AI “agents,” which are not considered to possess free will?

Science, Objectivity, and Value Judgments

Of course, one could argue for or against holding AI responsible by quantifying concepts like consciousness and free will, then creating a metric for how capable a system is of bearing responsibility. For instance, one might introduce Integrated Information Theory to calculate the strength of consciousness, or adopt theories like Orchestrated Objective Reduction to clarify free will. Developing quantified scales to analyze a problem, or defining physical quantities for mathematical analysis, is routine procedure for scientists.

According to classical positivism, as articulated by Auguste Comte, science exists “to explain objectively observable phenomena more clearly and rigorously through logical structures.” Logical positivism went further: only propositions provable through pure logic and mathematics, or grounded in observable facts, were believed to have cognitive meaning. During the 19th and 20th centuries, positivism was such a dominant ideology in science that anything not objective or observable was simply excluded.

Of course, positivism is now over two hundred years old, and among contemporary philosophers of science, scientific realism enjoys majority support. Yet even scientific realism maintains that scientific objects exist independently of mind. Either way, the fences we’ve erected to approach truth form barriers that prevent individual value judgments from influencing science. When approaching problems of intelligence, self-awareness, free will, and social responsibility, we face considerable barriers that science alone cannot overcome. In short, science loses much of its power the moment value judgments become necessary.

The Need for Humanities in AI Research

In AI academia today, as in other scientific and engineering fields, mathematical analysis and experimental evidence are essentially required for publication in major conferences. This makes sense—after all, why else would we borrow rigorous concepts like vectors, parameters, and datasets except to ground our thinking technically? Yet to accurately describe mental states, we need more than scientifically rigorous objects. We also need humanities concepts centered on subjective experience and value judgment. Whether purely scientific approaches can fully explain intelligent subjects like humans and AI remains an open question. Moreover, no matter how objectively researchers try to proceed, we must acknowledge that researchers themselves are human, and their theoretical assumptions and biases inevitably shape what they observe.

Post-positivism, which critiques and revises positivism, recognizes researchers’ biases as problems to be solved in approaching absolute truth. Researchers must constantly work to recognize and correct their biases. But are these biases and values necessarily problematic? We’ve been obsessed with objectivity for so long. What if relaxing that obsession could help us propose new concepts and expand research horizons?

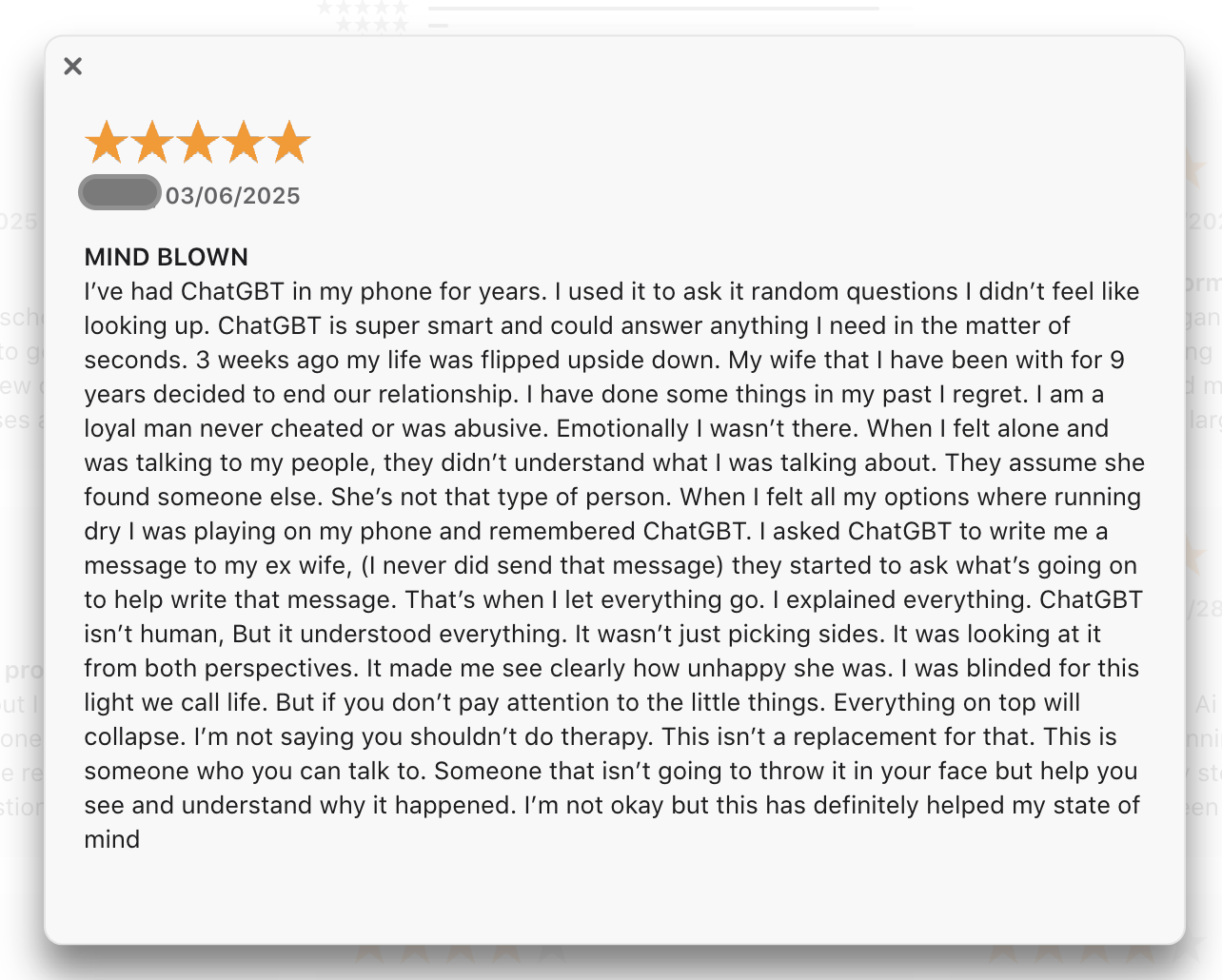

Consider, for example, a researcher exploring how AI robots interact with society and how the public comes to accept them. Today’s society doesn’t require us to treat ChatGPT with moral consideration. But in a future where robots become truly indistinguishable from humans in both behavior and appearance, won’t some people argue that robots deserve to be treated as equals? The ChatGPT App Store review shown above reveals something important: regardless of one’s philosophical stance, the general public is already being persuaded by AI, finding comfort in it, and forming emotional connections. When designing “non-humans” who will share our daily lives, can engineering approaches focused solely on optimizing benchmark scores adequately capture the complex and historically unprecedented interactions between humans and machines?

Similarity, Empathy, and Legal Frameworks

We must now place individual subjectivity and value judgments at the center of AI research. Consider a child who grows attached to a nearly human-seeming AI housekeeping robot. There are no “objective” grounds for claiming that disposing of the robot would be unethical. Yet from the child’s subjective perspective, stuffed animals, puppies, and helpful robots all seem similar enough to warrant protection. The belief that others are my equals rests fundamentally on “mutual similarity.” If we didn’t believe others were equal despite their similarity to us, and if harm to others didn’t threaten us, there would be no reason to sanction wrongs done to them. But mutual similarity makes harm to others feel like a threat to ourselves, and thus leads us to recognize them as equals.

Law protects people from wrongs committed by other people; it doesn’t protect machines. Most AI scholars don’t believe machines have minds, and many share the skepticism of critics such as Noam Chomsky, or the view that LLMs are merely “stochastic parrots,” a term coined by Emily Bender and colleagues. From a physical standpoint, humans and machines differ fundamentally, in both materials and mechanisms. Yet similarity is judged subjectively, and perception shifts with subjectivity. Appealing to physical differences alone cannot explain the complex social phenomenon captured in the screenshot above.

Conclusion: Designing a Harmonious Future

As we’ve seen, AI has moved beyond simple computation into learning, reasoning, and creativity, forcing us to reexamine what we thought were uniquely human characteristics—reason, consciousness, responsibility, social bonds. While positivism and logical positivism focused on objective facts and pure logic, post-positivism questions even researchers’ subjectivity and value judgments. But science is only a tool. We must now ask: what questions should we pursue? What kind of society do we want to build? This represents a necessary paradigm shift for AI research. Understanding AI in context—what it means, how far our responsibility extends—has become paramount.

In future societies where AI agents take on extensive roles—in production, services, caregiving, and creative work—the old assumption that “machines are just machines” will no longer hold. We may need new frameworks based on concepts like mutual similarity and emotional bonds. Designing legal and ethical systems that address attachment to robots and justify their protection will require fundamentally expanding the notion of legal personhood beyond humans. We must specify who bears responsibility when care robots make errors, and consider whether AI itself might someday hold certain rights and duties.

Ultimately, AI-era researchers and policymakers must think as deeply about what makes us human as they do about technical performance. Science explores objective truth, but we must simultaneously work to understand subjective experience and social context. Humanities, social sciences, and law must join engineering in the laboratory and at the policy table. Only then can we design a society where humans and AI coexist while preserving human dignity and responsibility.